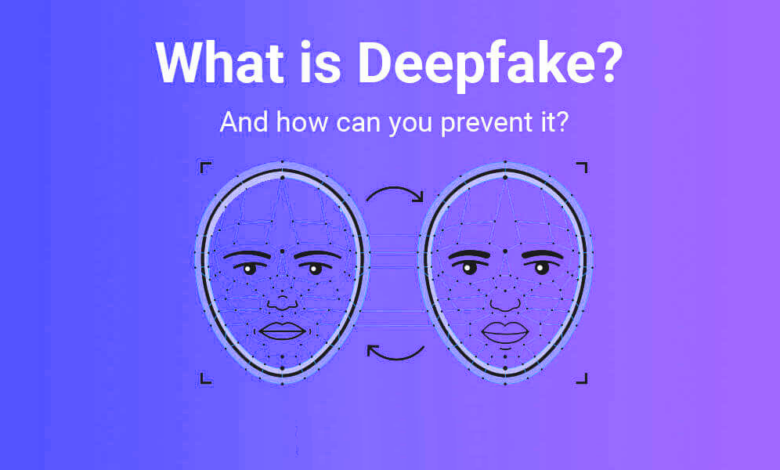

What Is Deepfake Technology and How Can We Prevent Deepfakes?

- Deepfakes employ artificial intelligence to replace the likeness of one person in video and other digital media with that of another.

- Deepfake technology has raised fears that it could be used to make fake news and deceptive, counterfeit videos.

- Here’s a crash course on deepfakes: what they are, how they function, and how to spot them.

Computers have been steadily improving their ability to simulate reality. In place of the physical sets and props that were once ubiquitous, modern films rely heavily on computer-generated sets, scenery, and characters, and most of the time these scenes are largely indistinguishable from reality.

Deepfake technology has recently made the news. Deepfakes, the most recent generation of computer imaging, are created when artificial intelligence (AI) is trained to substitute one person’s likeness in recorded video with another’s.

What exactly is a deepfake and how does it work?

The name “deepfake” is derived from the underlying AI technology, “deep learning.” Deep learning algorithms are used to swap faces in video and other digital content to create realistic-looking false media. These algorithms teach themselves how to solve problems when given large volumes of data.

Deepfakes can be created in a variety of ways, but the most common is to use deep neural networks with autoencoders that apply a face-swapping technique. You’ll need a target video to use as the deepfake’s foundation, as well as a collection of video clips of the individual you wish to place in the target.

The videos can be completely unrelated; for example, the target could be a clip from a Hollywood film, while the clips of the person you wish to insert could be random YouTube videos.

The autoencoder is a deep learning program that analyzes the video clips to learn how a person appears from various angles and in various environments, and then maps that person onto the individual in the target video by finding features the two have in common.
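
A common way to arrange such an autoencoder, which is an assumption here since the article does not spell it out, is one shared encoder that learns the traits both people have in common plus a separate decoder for each person. The toy sketch below uses four-number lists in place of face images and plain linear layers; the class `ToyFaceSwapper`, its methods, and the sample data are all hypothetical and only illustrate the data flow.

```python
import random

def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]

def rand_matrix(rows, cols, rng):
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]

class ToyFaceSwapper:
    """Hypothetical toy model: one shared encoder, one decoder per person."""

    def __init__(self, dim=4, latent=2, seed=0):
        rng = random.Random(seed)
        self.encoder = rand_matrix(latent, dim, rng)  # shared by both people
        self.decoders = {"a": rand_matrix(dim, latent, rng),
                         "b": rand_matrix(dim, latent, rng)}

    def reconstruct(self, face, person):
        return matvec(self.decoders[person], matvec(self.encoder, face))

    def loss(self, face, person):
        output = self.reconstruct(face, person)
        return sum((y - t) ** 2 for y, t in zip(output, face))

    def train_step(self, face, person, lr=0.02):
        """One gradient step on the squared reconstruction error."""
        dec = self.decoders[person]
        z = matvec(self.encoder, face)
        err = [y - t for y, t in zip(matvec(dec, z), face)]
        # Gradient with respect to the latent code (constant factor folded into lr).
        grad_z = [sum(dec[i][k] * err[i] for i in range(len(err)))
                  for k in range(len(z))]
        for i in range(len(dec)):
            for k in range(len(z)):
                dec[i][k] -= lr * err[i] * z[k]
        for k in range(len(z)):
            for j in range(len(face)):
                self.encoder[k][j] -= lr * grad_z[k] * face[j]

    def swap(self, face, target_person):
        """Encode any face, then decode it with the target person's decoder."""
        return self.reconstruct(face, target_person)

# Made-up data: the first two numbers stand for expression, the last two for identity.
expressions = [i / 10 for i in range(11)]
faces_a = [[e, 1 - e, 0.8, 0.2] for e in expressions]
faces_b = [[e, 1 - e, 0.2, 0.8] for e in expressions]

def total_loss(model):
    return (sum(model.loss(f, "a") for f in faces_a)
            + sum(model.loss(f, "b") for f in faces_b))

model = ToyFaceSwapper()
loss_before = total_loss(model)
for _ in range(300):
    for face_a, face_b in zip(faces_a, faces_b):
        model.train_step(face_a, "a")
        model.train_step(face_b, "b")
loss_after = total_loss(model)

# The swap: person A's frame goes through the shared encoder and B's decoder.
swapped = model.swap(faces_a[3], "b")
```

Real tools work on images with deep convolutional networks rather than small linear maps, but the swap step has the same shape: encode a frame of one person, then decode it with the other person's decoder.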

Another type of machine learning model, known as a Generative Adversarial Network (GAN), is added to the mix. It detects and corrects flaws in the deepfake over numerous rounds, making it more difficult for deepfake detectors to spot.

GANs are also a popular method for creating deepfakes in their own right, as they analyze massive amounts of data to “learn” how to build new examples that closely resemble the real thing, with painfully realistic results.

Several apps and programs make creating deepfakes simple even for amateurs, including the Chinese app Zao, DeepFaceLab, FaceApp (a picture editing tool with built-in AI techniques), Face Swap, and the now-defunct DeepNude, which generated false nude photographs of women.

A large number of deepfake programs can be found on GitHub, a hosting platform for open source software development. Some of these programs are used solely for entertainment, which is why deepfake creation isn’t prohibited, while others are significantly more likely to be used maliciously.

Many experts fear that as the technology advances, deepfakes will become significantly more sophisticated and pose more serious threats to the public, such as election meddling, political unrest, and other criminal activity.

What is deepfake technology used for?

While the capacity to swap faces automatically to create credible, realistic-looking synthetic video has some interesting benign applications (such as in cinema and gaming), it is clearly a dangerous technology with some problematic applications. Synthetic pornography was one of the first applications of deepfakes in the real world.

In 2017, a Reddit user named “deepfakes” created a forum for pornographic videos in which the actors’ faces had been swapped. Deepfake pornography (especially revenge pornography) has frequently made headlines since then, severely damaging the reputations of celebrities and public figures. Pornography accounted for 96 percent of deepfake videos discovered online in 2019, according to a Deeptrace analysis.

Deepfake video has also been used in politics. In 2018, for example, a Belgian political party released a video of Donald Trump giving a speech in which he called for Belgium to leave the Paris climate agreement. Trump never gave that speech; it was fabricated. That wasn’t the first time a deepfake was used to generate deceptive videos, and tech-savvy political experts expect a fresh wave of fake news built on convincingly realistic deepfakes.

Of course, not all deepfake video represents a threat to democracy’s survival. Some are made purely for entertainment, such as clips that answer questions like “What would Nicolas Cage look like if he appeared in ‘Raiders of the Lost Ark’?”

Are deepfakes limited to videos?

Deepfakes aren’t only about videos. Deepfake audio is a rapidly expanding field with numerous uses.

Realistic audio deepfakes can now be created with deep learning algorithms from just a few hours (or in some cases, minutes) of audio of the person whose voice is being cloned. Once a model of a voice has been created, that person can be made to say anything, as happened last year when fake audio of a CEO was used to commit fraud.

Deepfake audio has medical uses in the form of voice replacement, as well as uses in computer game design, where programmers can now let in-game characters say anything in real time instead of depending on a limited set of lines recorded before the game was released.

How to detect a deepfake video?

As deepfakes grow more frequent, society as a whole will have to adapt to spotting them in the same way that online users have adapted to spotting other types of fake news.

In many cases, as in cybersecurity, more deepfake technology is needed to identify deepfakes and stop them from spreading, which can create a vicious circle and potentially cause more harm.

Deepfakes can be identified by a number of indicators:

- Current deepfakes struggle to animate faces realistically, resulting in videos where the subject never blinks, or blinks far too frequently or unnaturally. However, after researchers at the University at Albany published a paper describing the blinking irregularity, new deepfakes appeared that no longer had this problem.

- Look for issues with the skin or hair, as well as faces that appear blurrier than their surroundings. The focus may look unnaturally soft.

- Does the lighting appear to be artificial? The lighting of the clips used as models for the false video is frequently retained by deepfake algorithms, which is often a poor match for the lighting in the target video.

- The audio may not appear to fit the person, especially if the video was fabricated but the original audio was not altered to match.
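
The blinking cue in the first indicator can be turned into a rough automated check. The sketch below assumes a separate face tracker has already produced one “eye openness” score per frame (0 for fully closed, 1 for fully open); that input, the function names, the closed-eye threshold, and the blink-rate range are all illustrative assumptions rather than validated values.

```python
def count_blinks(openness, closed_below=0.2):
    """Count open-to-closed transitions in a per-frame eye-openness series."""
    blinks = 0
    was_closed = False
    for value in openness:
        is_closed = value < closed_below
        if is_closed and not was_closed:
            blinks += 1  # a new blink starts on this frame
        was_closed = is_closed
    return blinks

def blink_rate_is_suspicious(openness, fps, normal_range=(4.0, 40.0)):
    """Flag clips whose blinks per minute fall outside an assumed normal range."""
    if not openness or fps <= 0:
        raise ValueError("need at least one frame and a positive frame rate")
    minutes = len(openness) / fps / 60.0
    rate = count_blinks(openness) / minutes
    low, high = normal_range
    return rate < low or rate > high

# One minute of video at 30 frames per second.
# "natural": the eyes close for 3 frames once every 120 frames.
natural = [0.05 if i % 120 < 3 else 1.0 for i in range(1800)]
# "unblinking": the eyes never close.
unblinking = [1.0] * 1800

natural_flag = blink_rate_is_suspicious(natural, fps=30)
unblinking_flag = blink_rate_is_suspicious(unblinking, fps=30)
```

Because newer deepfakes have already corrected the blinking problem, a check like this is at best one weak signal to combine with the other indicators.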

How to Use Technology to Fight Deepfakes

Liveness detection is a valuable feature for biometric recognition systems. It helps detect spoofing attempts by determining whether the source of a biometric sample is a real person or a faked image.

Identity verification, lip-sync authentication, and biometric facial recognition are all part of this technology. As a result, the chances of a spoofing attempt succeeding are greatly reduced.

Users are frequently asked to change their facial expressions or glance in different directions during liveness tests. You might be prompted to blink, smile, or frown. Voice authentication is another important aspect of liveness detection. As a result, deepfakes can’t readily get past liveness tests.

While deepfakes will only become more realistic over time as techniques improve, we aren’t completely powerless against them. A number of companies, including numerous startups, are researching approaches for detecting deepfakes.
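
The prompt-and-response idea behind these tests can be sketched in a few lines. This is a minimal illustration, not any vendor's implementation: the action names, the function names, the ten-second limit, and the assumption that a separate detector reports each observed action with a timestamp are all hypothetical.

```python
import random

ACTIONS = ("blink", "smile", "frown", "look_left", "look_right")

def make_challenge(rng, length=3):
    """Pick a random sequence of distinct actions the user must perform."""
    return rng.sample(ACTIONS, length)

def passes_liveness(challenge, observed, issued_at, max_seconds=10.0):
    """Check observed (action, timestamp) pairs against the challenge.

    The actions must match the challenge in order, timestamps must not go
    backwards, and everything must finish within max_seconds of issue.
    """
    if len(observed) != len(challenge):
        return False
    previous = issued_at
    for expected, (action, timestamp) in zip(challenge, observed):
        if action != expected:
            return False
        if timestamp < previous or timestamp - issued_at > max_seconds:
            return False
        previous = timestamp
    return True

rng = random.Random(42)
challenge = make_challenge(rng)

# A live user performs the requested actions in order, one per second.
live_response = [(action, 1.0 + i) for i, action in enumerate(challenge)]
# A pre-recorded clip shows the same actions in a different order.
replayed = [(action, 1.0 + i) for i, action in enumerate(reversed(challenge))]
# A response that arrives long after the challenge was issued.
too_slow = [(action, 60.0 + i) for i, action in enumerate(challenge)]

live_ok = passes_liveness(challenge, live_response, issued_at=0.0)
replay_ok = passes_liveness(challenge, replayed, issued_at=0.0)
slow_ok = passes_liveness(challenge, too_slow, issued_at=0.0)
```

Because the sequence is chosen at random for each session, a pre-recorded or pre-generated video is unlikely to contain the right actions in the right order.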

Sensity, for example, has created a deepfake detection tool that works like an antivirus, alerting users by email when they are viewing content that bears the fingerprints of AI-generated synthetic media. Sensity employs the same deep learning techniques that are used to create the fake videos.

Operation Minerva takes a more straightforward approach to detecting deepfakes. The company’s algorithm compares possible deepfakes to videos that have previously been “digitally fingerprinted.” It can detect revenge porn, for example, by recognizing that the deepfake video is essentially a modified version of one that Operation Minerva has already cataloged.

Facebook also organized the Deepfake Detection Challenge last year, an open, collaborative effort to encourage the development of new systems for detecting deepfakes and other types of altered media. The challenge offered cash prizes of up to $500,000.
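
The article does not say how Operation Minerva computes its fingerprints, so the sketch below swaps in a simple, well-known stand-in: an average hash, compared by Hamming distance. The tiny 4x4 grayscale “frames”, the catalog entry name, and the distance threshold are made up for illustration.

```python
def average_hash(frame):
    """Fingerprint a grayscale frame: 1 where a pixel is brighter than the mean."""
    pixels = [p for row in frame for p in row]
    mean = sum(pixels) / len(pixels)
    return tuple(1 if p > mean else 0 for p in pixels)

def hamming(a, b):
    """Number of positions at which two equal-length fingerprints differ."""
    if len(a) != len(b):
        raise ValueError("fingerprints must have the same length")
    return sum(x != y for x, y in zip(a, b))

def find_match(candidate, catalog, max_distance=2):
    """Return the name of the closest cataloged fingerprint, or None."""
    candidate_hash = average_hash(candidate)
    best = None
    for name, fingerprint in catalog.items():
        distance = hamming(candidate_hash, fingerprint)
        if distance <= max_distance and (best is None or distance < best[1]):
            best = (name, distance)
    return best[0] if best else None

original = [[10, 20, 200, 210],
            [15, 25, 205, 215],
            [220, 230, 30, 40],
            [225, 235, 35, 45]]
# Same frame with a small region altered, as when a face is replaced.
altered = [[150, 70, 200, 210],
           [15, 25, 205, 215],
           [220, 230, 30, 40],
           [225, 235, 35, 45]]
unrelated = [[200, 10, 200, 10],
             [10, 200, 10, 200],
             [200, 10, 200, 10],
             [10, 200, 10, 200]]

catalog = {"video_001": average_hash(original)}
altered_match = find_match(altered, catalog)
unrelated_match = find_match(unrelated, catalog)
```

A production system would fingerprint many frames per video and use a hash that tolerates cropping and re-encoding, but the lookup has the same outline: hash the candidate, then search the catalog for a near match.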
